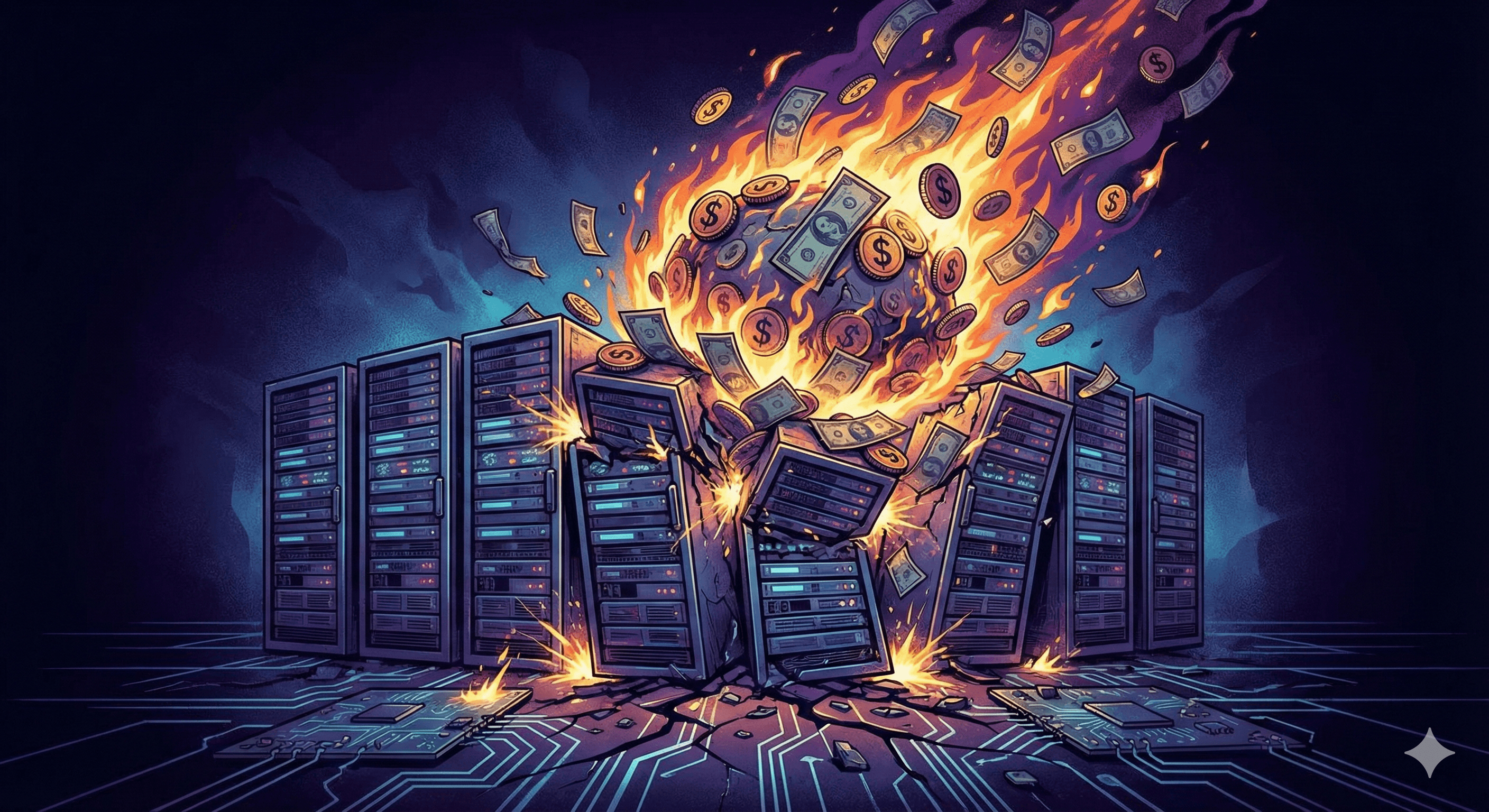

We work with companies migrating from traditional data warehouses. When we analyze their query costs, we see the same number over and over.

$50 per query.

Not a complex multi-hour analysis. Not a massive data migration. One query. Fifty dollars.

That's like paying $50 every time you Google something.

Nobody talks about it because nobody wants to admit how expensive it is.

See For Yourself

Pull up any Snowflake, Databricks, or BigQuery bill. Look for a line item called "compute" or "analysis" or "warehouse credits." Now divide that amount by the number of queries run in the same billing period.

For a typical company with 20TB of data, the math is brutal:

Assume $10/TB of data scanned

90% of your queries use your partition key(s)

Partitioned queries average 2% of the dataset

Those queries cost $4.00 each - that seems fine!

Unpartitioned queries hit the entire dataset

They cost $200 each - um, what?

Executing 10,000 queries per month = $36,000 (Partitioned) + $200,000 (Unpartitioned)

The unpartitioned queries are the part that matters - and they are absolutely killing you!

This is an optimistic scenario!
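If you want to rerun that arithmetic with your own numbers, here is a minimal Python sketch. The defaults are the assumptions listed above (20 TB of data, $10 per TB scanned, 90% of queries using a partition key and scanning 2% of the dataset, 10,000 queries per month). The function and field names are illustrative only, and real platform pricing is more complicated than a flat per-TB rate.

```python
def monthly_query_cost(
    dataset_tb=20.0,            # total data size, from the scenario above
    price_per_tb=10.0,          # assumed dollars per TB scanned
    partitioned_share=0.90,     # fraction of queries that use a partition key
    partitioned_scan=0.02,      # fraction of the dataset a partitioned query scans
    queries_per_month=10_000,
):
    """Estimate one month of scan-based query spend under the stated assumptions."""
    # Per-query cost is simply TB scanned times the price per TB.
    cost_partitioned = dataset_tb * partitioned_scan * price_per_tb
    cost_full_scan = dataset_tb * price_per_tb

    n_partitioned = round(queries_per_month * partitioned_share)
    n_full_scan = queries_per_month - n_partitioned

    spend_partitioned = n_partitioned * cost_partitioned
    spend_full_scan = n_full_scan * cost_full_scan
    total = spend_partitioned + spend_full_scan

    return {
        "cost_per_partitioned_query": cost_partitioned,
        "cost_per_full_scan_query": cost_full_scan,
        "monthly_partitioned_spend": spend_partitioned,
        "monthly_full_scan_spend": spend_full_scan,
        "monthly_total": total,
        "average_cost_per_query": total / queries_per_month,
    }


if __name__ == "__main__":
    for name, value in monthly_query_cost().items():
        print(f"{name}: ${value:,.2f}")
```

Swap in the data size, scan price, and query counts from an actual bill to see how much of the total comes from full scans.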

We've seen this at media companies, ad agencies, pharmaceutical firms. Same story everywhere.

Data Emperors with No Clothes

Why doesn't anyone talk about this?

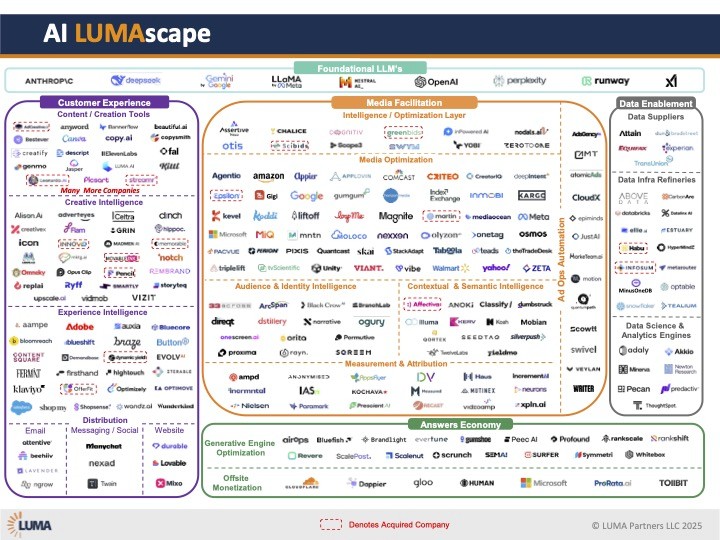

The Vendors Don't Want You to Notice

They bury it in "credits" and "compute units" and "slot hours." They never say "hey, queries cost $50 each." They say "optimize your cluster size" and "use materialized views" and "implement caching strategies."

Anything to avoid the real conversation: that running a simple GROUP BY costs more than the analyst's hourly rate. (An entire hour of compute can cost THOUSANDS of dollars, depending on the platform and the job.)

Your Finance Team Doesn't Understand It

They see a line item for "data infrastructure." They don't see that it's really a tax on thinking. They don't realize that every dashboard refresh, every ad-hoc analysis, every "let me just check something" is a $50 transaction.

It's the perfect crime. Hidden in plain sight.

Your Engineering Team … Doesn’t Care

Most engineering organizations we speak to are responsible neither for solving the business need nor for managing the cost of the deployed solution. By the time this lands on their desk, the most common solution is to just run fewer queries - to do less thinking.

Actually Answering Questions Compounds the Problem

Finding answers in data requires poking around. You won’t know what you are looking for until you find it, so you just have to try a bunch of stuff. That means potentially executing a lot of queries. Answering a single question about the business will frequently require 10 queries, 20 queries, or more. At $50 per query, a routine answer to a single business question can easily cost $1,000. Real research requires hundreds, if not thousands, of queries. In practice the expense is so prohibitive that people often just don’t use their data warehouse for anything interesting.

Instead, teams learn to run the absolute minimum number of queries. They guess instead of check. They argue opinions instead of testing hypotheses. Multi-million-dollar decisions are limited by thousand-dollar data budgets.

This acts as a curiosity kill switch for your business.

Data Warehouses Scale. Their Query Economics Do Not.

We recently worked with a large advertising agency. They wanted to let their teams explore their data more freely. The costs to do that with a data warehouse are fairly simple to project:

Year One: You let 100 analysts actually explore data. Just 100 or so queries per month each. Nothing crazy. 10,000 queries/month × $50 = $500k/month

Year Two: You build some basic AI agents to help with optimization. They run scenarios for your top clients. 100,000 queries/month × $50 = $5M/month

Year Three: Your agents get good. Really good. They continuously optimize everything. 500,000 queries/month × $50 = $25M/month

That would be $300 million a year. To think.
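The projection is nothing more than queries per month times cost per query. A minimal Python sketch, assuming the flat $50-per-query figure and the three volumes above (the constant and function names are illustrative):

```python
COST_PER_QUERY = 50  # dollars; the flat per-query figure used in this article

# Monthly query volumes from the three-year scenario above.
SCENARIOS = {
    "Year One": 100 * 100,   # 100 analysts x roughly 100 queries each
    "Year Two": 100_000,     # basic AI agents running client scenarios
    "Year Three": 500_000,   # agents continuously optimizing everything
}


def project_costs(scenarios, cost_per_query=COST_PER_QUERY):
    """Return {label: (monthly_cost, annual_cost)} in dollars."""
    return {
        label: (queries * cost_per_query, queries * cost_per_query * 12)
        for label, queries in scenarios.items()
    }


for label, (monthly, annual) in project_costs(SCENARIOS).items():
    print(f"{label}: ${monthly:,}/month, ${annual:,}/year")
```

Change `COST_PER_QUERY` to the average from your own bill and the shape of the curve stays the same: cost grows in lockstep with curiosity.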

Why This Won't Change…

Here's what’s driving this insanity: the leading data warehouse vendors built their business around a “razors and blades” model. They take a loss on the price of the razors (data storage, at rest), and then profit hand over fist on the blades (compute).

This is a side effect of how these warehouses are architected: storage and compute are separated. This is great for intermittent use - the storage is cheap, and you only pay for the queries you run. To make this work, though, massive compute clusters are spun up to scan the data for each query you execute. It's like starting a jet engine to blow out a candle.

There are probably things that could be done to make this process more efficient. But remember, these companies make 90% of their revenue from compute. More compute = more revenue. Reducing the amount of compute they use to run queries would lead directly to less revenue. Their entire business model would collapse!

So they add features. They talk about "lakehouse architecture" and "unified analytics" and "AI-native platforms." They optimize around the edges - better caching, smart partitioning, bigger clusters.

…Until It Does

What if we built differently? What if we optimized for query speed and cost rather than storage?

Then everything changes.

Those 100 analysts can explore freely. Your AI agents can run thousands or millions of queries. You can test hypotheses instead of protecting your query budget.

What Questions Would You Ask at Zero Incremental Cost?

Imagine that queries are free. Not cheap. Free.

Every campaign gets 1,000 optimization passes, not 3. Every employee gets direct data access, not pre-computed dashboards. Every hunch gets tested. Every assumption gets verified. Every guess can be checked.

That's not a 10x improvement. That's a completely different way of doing business.

The Choice

Every revolution starts the same way. Something new comes along. Companies do the math. The last generation's "best practices" become the next generation's cautionary tales.

Your competitors are figuring this out now. They’re not smarter than you - they just aren’t paying a tax to think anymore. Are you next?